By Lilian Nassi-Calò

Photo: Kristoffer Trolle.

Science advances by building on the knowledge acquired in previous studies, and the reliability and reproducibility of results is one of the pillars of scientific research. Important conclusions are drawn from thousands of experimental results, and preclinical research and the development of new drugs, in particular, require as an initial premise that results can be confirmed by other researchers in different labs. Concerns about research reproducibility increased mainly after the pharmaceutical companies Bayer and Amgen reported being unable to reproduce many of the articles that describe chemicals as potential new drugs for the treatment of cancer and other diseases, as previously reported in this blog1-3.

Reproducibility initiative

Noting that numerous pharmaceutical companies employed researchers to validate experimental results involving potential new drugs, Elizabeth Iorns, chief executive of Science Exchange – a service that connects researchers with providers of experimental work – developed the “Reproducibility Project: Cancer Biology”. The initiative, created in 2013, aimed to validate 50 of the most relevant and high-impact published articles in cancer research. For this purpose it relied on US$ 1.3 million in funding from a private foundation, the Arnold Foundation. The project was supervised by the Center for Open Science, with independent scientists working through Science Exchange, who first submitted to the authors of the original articles the methodology and materials to be used to verify the experiments. At the time, the initiative was received with reservations by some researchers: “It’s incredibly difficult to replicate cutting-edge science”, warned Peter Sorger, a biologist at Harvard Medical School in Boston, MA, USA. Others reacted very positively. William Gunn, co-director of the initiative, said that “This project is key to solving an issue that has plagued scientific research for years”. John Ioannidis, an epidemiologist and statistician at Stanford University who is on the initiative’s scientific advisory board, thinks that the project can help the academic community recognize weaknesses in the experimental design of studies, stating “A pilot like this could tell us what we could do better”. Muin Khoury, who heads the Public Health Genomics area of the Centers for Disease Control and Prevention in Atlanta, GA, USA, endorsed the idea, stating that the project “will begin to address huge gaps in the first of many translational steps from scientific discoveries to improving health”.

More than three years after the launch of the project, the studies that attempted to replicate the original results are being published, and they indicate that there is room to improve the reproducibility of preclinical studies in cancer research. To begin, it is necessary to define replication. According to Brian Nosek and Timothy Errington4, who analyzed the results of the first five replication studies, replicating means “independently repeating the methodology of a previous study and obtaining the same results”. However, what does “same results” mean? According to the authors, the question has no simple answer.

Each of the first five studies on potential drugs to treat certain types of cancer was directly replicated by independent scientists in different labs. Direct replication means that the same protocols (methodology and materials) as in the primary studies were used, and that these protocols were reviewed by the original authors, who could supply further experimental details to increase their accuracy. The plan for each replication was peer reviewed and published before the experiments were performed. These studies therefore assess whether the results of the primary studies are reproducible, that is, whether repeating them leads to the same results, but they do not provide evidence about the validity of the original conclusions. If replication fails, the reliability of the original study decreases, but this does not mean that its results were incorrect, because details that at first glance seemed irrelevant may in fact be crucial. If, on the other hand, replication succeeds, this increases the reliability of the primary study and the likelihood that its results can be generalized. It is important to keep in mind that scientific claims gain credibility by accumulating evidence, and a single replication does not allow a definitive judgment on the results of the original research. In addition to the new results, replication studies include meta-analyses that combine the original and replicated results to test whether the combined effect is statistically significant, which increases statistical power relative to the original sample alone. The importance of the “Reproducibility Initiative” lies in the fact that new evidence, when replication fails, provides important indications as to the cause of irreproducibility and the factors influencing it, as well as opportunities to improve it.
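
To make the idea of combining original and replicated results concrete, the following is a minimal sketch of one common approach, fixed-effect (inverse-variance) pooling. It is an illustration only, not necessarily the procedure used in the replication studies; the function name `combine_fixed_effect` and the effect sizes and standard errors in the usage note are hypothetical.

```python
import math


def combine_fixed_effect(estimates, std_errors):
    """Pool effect estimates with inverse-variance (fixed-effect) weights.

    Returns the pooled estimate, its standard error, the z statistic,
    and a two-sided p-value based on the normal approximation.
    """
    # More precise studies (smaller standard error) get larger weights.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)

    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)

    z = pooled / pooled_se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return pooled, pooled_se, z, p_value


if __name__ == "__main__":
    # Hypothetical numbers: an original study and one replication.
    original_effect, original_se = 0.80, 0.30
    replication_effect, replication_se = 0.20, 0.25

    pooled, pooled_se, z, p_value = combine_fixed_effect(
        [original_effect, replication_effect],
        [original_se, replication_se],
    )
    print(f"pooled effect = {pooled:.3f} (SE {pooled_se:.3f}), "
          f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up inputs the pooled estimate falls between the two studies, closer to the more precise one, and its standard error is smaller than either study's own. That smaller standard error is the sense in which combining results increases statistical power.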

Articles containing the results of the first five replication studies were published in early January 2017 in eLife4. This open access journal, launched in 2012 by the Howard Hughes Medical Institute, the Max Planck Society and the Wellcome Trust, was chosen primarily for its fast, efficient, and transparent peer review system, which publishes selected parts of the reviews and decisions with the authorization of referees and authors. Two studies reproduced important parts of the original papers, and one did not. The other two studies could not be interpreted, because the in vivo tumor growth experiments behaved unexpectedly, which made it impossible to draw any conclusions.

These first results constitute a small percentage of the total number of studies to be replicated and do not allow conclusions to be drawn as to the success of the initiative, or statements on how many experiments were and were not replicated. First of all, it is important to note that the Reproducibility Initiative is itself an experiment, and there are not yet enough data to conclude whether it was successful. The fact that the methodology of the replication studies was pre-established, peer reviewed, and published is both an advantage and a disadvantage: while it avoids biases, it also prevents independent researchers from changing protocols when results are impossible to interpret, particularly in in vivo studies. Those responsible for the project caution that we cannot expect conclusive results that are simply positive (reproducible) or negative (irreproducible), but rather a range of nuanced interpretations.

Is there a reproducibility crisis?

This outcome was somewhat expected long before the first results came out. An online survey conducted by Nature5 with more than 1,500 researchers from all fields of knowledge, published in 2016, shows that more than 70% had tried and failed to replicate third-party experiments and more than 50% could not replicate their own experiments. However, only 20% of the respondents said they had been contacted by other researchers who could not reproduce their results. The matter is sensitive, because raising it carries the risk of appearing incompetent or accusatory. Instead, when a result cannot be reproduced, scientists tend to assume that there is a perfectly plausible reason for the failure. In fact, 73% of respondents think that at least 50% of the results in their areas are reproducible, with physicists and chemists being among the most confident.

The results illustrate the general sentiment on the subject. According to Arturo Casadevall, a microbiologist at Johns Hopkins Bloomberg School of Public Health in Baltimore, MD, USA, “At the current time there is no consensus on what reproducibility is or should be […] and the next step may be identifying what is the problem and to get a consensus.” The challenge is not to eliminate problems with reproducibility in published work, since being at the cutting edge of science means that sometimes results will not be robust, says Marcus Munafò, a psychologist at the University of Bristol, UK, who studies scientific reproducibility. Drawing on his own experience, he considers failing to reproduce results a rite of passage.

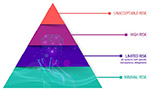

As for the causes of irreproducibility, the most common factors are related, as expected, to intense competition and pressure to publish. Among the reasons most cited by researchers are: selective reporting; pressure to publish; low statistical power or poor analysis; not replicated enough in original lab; insufficient mentoring; unavailable methodology; poor experimental design; raw data not available from original lab; fraud; and insufficient peer review.

The survey showed that 24% of respondents reported having published successful replications and 13% reported having published failed replication results. In some authors’ experience, the publication of replication results can be facilitated if the article offers a plausible explanation for the possible causes of success or failure. Between 24% (physics and engineering) and 41% (medical sciences) of the researchers reported that their labs have taken concrete measures to increase the reproducibility of experiments, such as repeating the experiments several times, asking other labs to replicate the studies, or increasing experimental standardization and improving the description of the methodology used. What may seem like an expenditure of material, time and resources can actually prevent future problems and the retraction of published articles. According to the survey, one of the best ways to increase reproducibility is to pre-register protocols and methodologies, so that the hypothesis to be tested and the work plan are submitted to third parties in advance. This was, in fact, the methodology used by the Reproducibility Initiative, where the experimental design was peer reviewed and published prior to starting the replication studies. The availability of raw data in open access repositories also contributes, by preventing authors from cherry-picking only the results that corroborate their hypothesis.

How to improve reproducibility?

Researchers surveyed by Nature indicated several approaches to increase research reproducibility. More than 90% indicated “more robust experimental design”, “better statistics” and “more efficient mentoring”. Even pre-publication peer review was pointed out by Jan Velterop, a marine geophysicist, former scientific editor, open access advocate and frequent contributor to the SciELO in Perspective blog, as an aggravating factor for irreproducibility in science. In fact, about 80% of respondents told Nature that funding agencies and publishers should play a more significant role in this regard. There seems to be a consensus that the discussion on reproducibility is timely and welcome, as it encourages more people to work on improving it. According to Irakli Loladze, a mathematical biologist at the Bryan College of Health Sciences in Lincoln, NE, USA, “Reproducibility is like brushing your teeth, it is good for you, but it takes time and effort. Once you learn it, it becomes a habit”.

Notes

1. NASSI-CALÒ, L. Reproducibility of research results: the tip of the iceberg [online]. SciELO in Perspective, 2014 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2014/02/27/reproducibility-of-research-results-the-tip-of-the-iceberg/

2. NASSI-CALÒ, L. Reproducibility in research results: the challenges of attributing reliability [online]. SciELO in Perspective, 2016 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2016/03/31/reproducibility-in-research-results-the-challenges-of-attributing-reliability/

3. VELTEROP, J. Is the reproducibility crisis exacerbated by pre-publication peer review? [online]. SciELO in Perspective, 2016 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2016/10/20/is-the-reproducibility-crisis-exacerbated-by-pre-publication-peer-review/

4. NOSEK, B.A. and ERRINGTON, T.M. Making sense of replications. eLife [online]. 2017, vol. 6, p. e23383, ISSN: 2050-084X [viewed 24 January 2017]. DOI: 10.7554/eLife.23383. Available from: http://elifesciences.org/content/6/e23383

5. BAKER, M. Is there a reproducibility crisis? Nature [online]. 2016, vol. 533, no. 7604, p. 452 [viewed 24 January 2017]. DOI: 10.1038/533452a. Available from: http://www.nature.com/news/1-500-scientists-lift-the-lid-on-reproducibility-1.19970

References

BAKER, M. Independent labs to verify high-profile papers [online]. Nature. 2012 [viewed 24 January 2017]. DOI: 10.1038/nature.2012.11176. Available from: http://www.nature.com/news/independent-labs-to-verify-high-profile-papers-1.11176

BAKER, M. Is there a reproducibility crisis? Nature [online]. 2016, vol. 533, no. 7604, p. 452 [viewed 24 January 2017]. DOI: 10.1038/533452a. Available from: http://www.nature.com/news/1-500-scientists-lift-the-lid-on-reproducibility-1.19970

BEGLEY, C.G. and ELLIS, L.M. Drug development: Raise standards for preclinical cancer research. Nature [online]. 2012, vol. 483, no. 7391, pp. 531–533 [viewed 24 January 2017]. DOI: 10.1038/483531a. Available from: http://www.nature.com/nature/journal/v483/n7391/full/483531a.html

NASSI-CALÒ, L. Reproducibility of research results: the tip of the iceberg [online]. SciELO in Perspective, 2014 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2014/02/27/reproducibility-of-research-results-the-tip-of-the-iceberg/

NASSI-CALÒ, L. Reproducibility in research results: the challenges of attributing reliability [online]. SciELO in Perspective, 2016 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2016/03/31/reproducibility-in-research-results-the-challenges-of-attributing-reliability/

NOSEK, B.A. and ERRINGTON, T.M. Making sense of replications. eLife [online]. 2017, vol. 6, p. e23383, ISSN: 2050-084X [viewed 24 January 2017]. DOI: 10.7554/eLife.23383. Available from: http://elifesciences.org/content/6/e23383

PRINZ, F., SCHLANGE, T. and ASADULLAH, K. Believe it or not: how much can we rely on published data on potential drug targets? Nature Reviews Drug Discovery [online]. 2011, vol. 10, no. 9, p. 712 [viewed 24 January 2017]. DOI: 10.1038/nrd3439-c1. Available from: http://www.nature.com/nrd/journal/v10/n9/full/nrd3439-c1.html

SPINAK, E. Open-Data: liquid information, democracy, innovation… the times they are a-changin’ [online]. SciELO in Perspective, 2013 [viewed 29 January 2017]. Available from: http://blog.scielo.org/en/2013/11/18/open-data-liquid-information-democracy-innovation-the-times-they-are-a-changin/

The challenges of replication. eLife [online]. 2017, vol. 6, p. e23693, ISSN: 2050-084X [viewed 24 January 2017]. DOI: 10.7554/eLife.23693. Available from: http://elifesciences.org/content/6/e23693

VAN NOORDEN, R. Initiative gets $1.3 million to verify findings of 50 high-profile cancer papers [online]. Nature News Blog, 2013 [viewed 24 January 2017]. Available from: http://blogs.nature.com/news/2013/10/initiative-gets-1-3-million-to-verify-findings-of-50-high-profile-cancer-papers.html

VELTEROP, J. Is the reproducibility crisis exacerbated by pre-publication peer review? [online]. SciELO in Perspective, 2016 [viewed 24 January 2017]. Available from: http://blog.scielo.org/en/2016/10/20/is-the-reproducibility-crisis-exacerbated-by-pre-publication-peer-review/

External Links

Laura and John Arnold Foundation – <http://www.arnoldfoundation.org/>

Reproducibility Project: Cancer Biology – <http://validation.scienceexchange.com/#/reproducibility-initiative>

Science Exchange – <http://www.scienceexchange.com/>

Wellcome Trust – <http://www.wellcome.ac.uk/About-us/Policy/Spotlight-issues/Open-access/Journal/index.htm>

About Lilian Nassi-Calò

Lilian Nassi-Calò studied chemistry at Instituto de Química – USP, holds a doctorate in Biochemistry by the same institution and a post-doctorate as an Alexander von Humboldt fellow in Wuerzburg, Germany. After her studies, she was a professor and researcher at IQ-USP. She also worked as an industrial chemist and presently she is Coordinator of Scientific Communication at BIREME/PAHO/WHO and a collaborator of SciELO.

Translated from the original in Portuguese by Lilian Nassi-Calò.
